How to Classify Your AI's Risk Level Under the EU AI Act (Art. 6)


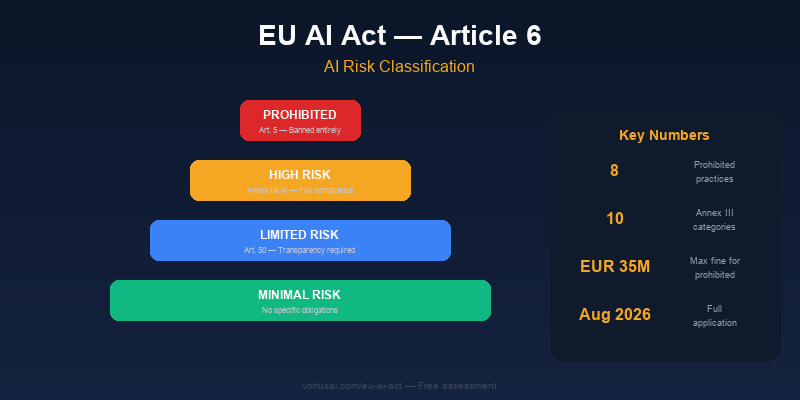

Article 6 is the cornerstone of the EU AI Act: it sets the rules for deciding whether an AI system is high-risk and, therefore, which obligations your business has. Misclassifying a system and missing the high-risk obligations can cost you up to EUR 15 million or 3% of worldwide annual turnover.

flowchart TD

A["Your AI System"] --> B{"Is it a prohibited\npractice?\n(Art. 5)"}

B -->|Yes| C["PROHIBITED\nFine: up to 35M EUR / 7%"]

B -->|No| D{"Safety component of\nan Annex I product?"}

D -->|Yes| E["HIGH RISK\nFull compliance required"]

D -->|No| F{"Annex III\nuse case?"}

F -->|Yes| G{"Does Art. 6(3)\nexception apply?"}

G -->|Yes| H["LIMITED RISK\nTransparency obligations"]

G -->|No| E

F -->|No| I{"Interacts with people\nor generates content?"}

I -->|Yes| H

I -->|No| J["MINIMAL RISK\nNo specific obligations"]

style C fill:#FECACA,stroke:#B91C1C

style E fill:#FEF3C7,stroke:#F5A623

style H fill:#DBEAFE,stroke:#2563EB

style J fill:#D1FAE5,stroke:#059669

The 4 risk categories

Level 1: Prohibited (Art. 5)

Completely banned in the EU. Examples include social scoring, subliminal manipulation, emotion recognition in the workplace, and untargeted scraping of facial images.

Your obligation: Don’t use. If you detect such a system, stop using it immediately. Fine: Up to EUR 35M or 7% of worldwide annual turnover, whichever is higher.
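To make the "EUR 35M or 7%" ceiling concrete, here is a minimal sketch of the arithmetic. It assumes the "whichever is higher" reading of Art. 99(3) and the "whichever is lower" rule for SMEs in Art. 99(6); the function name and parameters are illustrative, not part of any official tool, and the result is only the upper bound of a fine, not a prediction of what an authority would impose.

```python
def max_fine_eur(
    worldwide_turnover_eur: int,
    fixed_cap_eur: int = 35_000_000,  # Art. 5 violations; assumed default
    turnover_percent: int = 7,        # percent of worldwide annual turnover
    sme: bool = False,
) -> int:
    """Return the upper bound of the fine in whole euros (illustrative only)."""
    # Integer arithmetic avoids floating-point rounding on large amounts.
    turnover_based = worldwide_turnover_eur * turnover_percent // 100
    if sme:
        # Assumption: for SMEs the lower of the two amounts is the ceiling.
        return min(fixed_cap_eur, turnover_based)
    return max(fixed_cap_eur, turnover_based)


print(max_fine_eur(1_000_000_000))          # 70000000
print(max_fine_eur(100_000_000))            # 35000000
print(max_fine_eur(100_000_000, sme=True))  # 7000000
```

The same function covers the high-risk tier by passing `fixed_cap_eur=15_000_000` and `turnover_percent=3`.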

Level 2: High risk (Art. 6 + Annexes I & III)

High-risk systems can be used, but they require full compliance: technical documentation, conformity assessment, human oversight, EU database registration. The high-risk deadline of August 2026 means businesses must act now.

Via Annex I (product safety): AI used as a safety component of products such as machinery, toys, medical devices, vehicles, and aviation equipment.

Via Annex III (use case): Biometrics, critical infrastructure, education, employment, essential services, law enforcement, migration, justice.

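Exception Art. 6(3): Even Annex III systems are NOT high-risk if they don’t pose a significant risk of harm to health, safety, or fundamental rights AND meet at least one of these conditions: they perform a narrow procedural task, improve the result of a previously completed human activity, detect decision-making patterns without replacing or influencing human judgment, or perform a preparatory task for an assessment. The exception never applies to systems that profile natural persons, and a provider relying on it must document the assessment (Art. 6(4)).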

Level 3: Limited risk (Art. 50)

Transparency obligations only. Inform users they’re interacting with AI, label AI-generated content.

Examples: Chatbots, content generators, virtual assistants.

Level 4: Minimal risk

No specific obligations. Free use.

Examples: Spam filters, product recommendations, text correction, internal document classification.

Quick decision tree

- Prohibited practice (Art. 5)? → YES = BANNED

- Safety component of Annex I product? → YES = HIGH RISK

- Annex III use case? → YES = check Art. 6(3) exception

  - Exception applies → LIMITED RISK

  - No exception → HIGH RISK

- Interacts with people or generates content? → YES = LIMITED RISK

- None of the above → MINIMAL RISK
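The decision tree above can be sketched as a small function. This is a minimal illustration that mirrors the article's simplified tree step by step; the class and field names are hypothetical, and each boolean input is a legal judgment you still have to make and justify yourself, not something the code can work out.

```python
from dataclasses import dataclass
from enum import Enum


class RiskLevel(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high risk"
    LIMITED = "limited risk"
    MINIMAL = "minimal risk"


@dataclass(frozen=True)
class SystemProfile:
    """Answers to the tree's questions (hypothetical field names)."""
    prohibited_practice: bool       # Art. 5
    annex_i_safety_component: bool  # Annex I product
    annex_iii_use_case: bool        # Annex III area
    art_6_3_exception: bool         # only relevant for Annex III systems
    interacts_or_generates: bool    # Art. 50 trigger


def classify(p: SystemProfile) -> RiskLevel:
    # Questions are asked in the same order as the tree; first match wins.
    if p.prohibited_practice:
        return RiskLevel.PROHIBITED
    if p.annex_i_safety_component:
        return RiskLevel.HIGH
    if p.annex_iii_use_case:
        return RiskLevel.LIMITED if p.art_6_3_exception else RiskLevel.HIGH
    if p.interacts_or_generates:
        return RiskLevel.LIMITED
    return RiskLevel.MINIMAL


faq_chatbot = SystemProfile(False, False, False, False, True)
cv_screener = SystemProfile(False, False, True, False, True)
print(classify(faq_chatbot))  # RiskLevel.LIMITED
print(classify(cv_screener))  # RiskLevel.HIGH
```

Keeping the answers in a frozen dataclass means the inputs behind each classification can be stored next to the result as part of the written justification.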

Classification checklist

For each AI system:

- Listed in AI systems inventory

- Checked against 8 prohibited practices (Art. 5)

- Checked against Annex I products

- Checked against Annex III use cases

- Art. 6(3) exception evaluated if applicable

- Classification documented with justification

- Responsible person assigned

- Next review date set
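One way to keep this checklist auditable is to store it as a record per AI system. The sketch below is an assumption about how such a record might look, not a prescribed format; all field names are hypothetical, and `open_items` simply reports which checklist entries are still missing.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ClassificationRecord:
    """One record per AI system, with one field per checklist entry."""
    system_name: str
    in_inventory: bool = False
    checked_art_5: bool = False
    checked_annex_i: bool = False
    checked_annex_iii: bool = False
    art_6_3_applicable: bool = False  # only true for Annex III systems
    art_6_3_evaluated: bool = False
    justification: str = ""
    responsible_person: str = ""
    next_review: Optional[date] = None

    def open_items(self) -> list[str]:
        checks = [
            (self.in_inventory, "list in AI systems inventory"),
            (self.checked_art_5, "check against Art. 5 prohibited practices"),
            (self.checked_annex_i, "check against Annex I products"),
            (self.checked_annex_iii, "check against Annex III use cases"),
            # The exception only needs evaluating when it is applicable.
            (self.art_6_3_evaluated or not self.art_6_3_applicable,
             "evaluate Art. 6(3) exception"),
            (bool(self.justification.strip()),
             "document classification with justification"),
            (bool(self.responsible_person.strip()), "assign responsible person"),
            (self.next_review is not None, "set next review date"),
        ]
        return [label for done, label in checks if not done]


record = ClassificationRecord("support-chatbot", in_inventory=True, checked_art_5=True)
for item in record.open_items():
    print("TODO:", item)
```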

What changes if you’re high-risk

| Requirement | What to do | Article |
|---|---|---|
| Risk management | Continuous system | Art. 9 |
| Data governance | Training data quality | Art. 10 |
| Technical documentation | Complete Annex IV docs | Art. 11 |
| Record-keeping | Automatic system logs | Art. 12 |
| Transparency | Inform deployers | Art. 13 |
| Human oversight | Override mechanism + supervisor | Art. 14 |
| Accuracy | Performance and robustness | Art. 15 |
| Conformity | Assessment before market | Art. 43 |
| Registration | EU database entry | Art. 49 |
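For a quick gap report, the table above can be held as data. This is only a sketch: the mapping copies the table's rows, while the function name and the idea of tracking completed requirements in a set are assumptions made for illustration.

```python
# Requirement -> article, copied from the table above.
HIGH_RISK_REQUIREMENTS = {
    "Risk management": "Art. 9",
    "Data governance": "Art. 10",
    "Technical documentation": "Art. 11",
    "Record-keeping": "Art. 12",
    "Transparency": "Art. 13",
    "Human oversight": "Art. 14",
    "Accuracy": "Art. 15",
    "Conformity": "Art. 43",
    "Registration": "Art. 49",
}


def open_requirements(completed: set[str]) -> list[str]:
    """List requirements not yet completed, in table order."""
    unknown = completed.difference(HIGH_RISK_REQUIREMENTS)
    if unknown:
        # Catch typos early instead of silently ignoring them.
        raise ValueError(f"unknown requirement(s): {sorted(unknown)}")
    return [
        f"{name} ({article})"
        for name, article in HIGH_RISK_REQUIREMENTS.items()
        if name not in completed
    ]


print(open_requirements({"Risk management", "Data governance"}))
```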

Practical example: Classifying a customer service chatbot

Suppose your company deploys an AI chatbot on your website to answer customer questions about product availability and returns. How would you classify it?

- Prohibited (Art. 5)? No. It does not perform social scoring, subliminal manipulation, or any banned practice.

- Safety component of an Annex I product? No. It is a standalone software tool, not embedded in machinery or a medical device.

- Annex III use case? No. Answering questions about product availability and returns does not fall within any Annex III area (biometrics, critical infrastructure, education, employment, essential services, law enforcement, migration, justice). Note that interacting with natural persons is not an Annex III category; it is the trigger for the transparency obligations in Art. 50.

- Art. 6(3) exception? Not needed. The exception only matters for systems that fall under Annex III, and this chatbot does not.

- Interacts with people? Yes — users chat with it directly.

Classification: Limited risk (Art. 50). Your obligation is transparency: inform users they are interacting with an AI system, not a human. A simple banner such as “You are chatting with an AI assistant” is a common way to meet this requirement.

If that same chatbot were used to screen job applicants or assess creditworthiness, the classification would jump to high risk because those fall squarely under Annex III categories (employment, essential services). Context determines classification, not the technology itself.
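The transparency notice from the chatbot example could be wired in roughly as follows. This is a hypothetical sketch: `with_disclosure` and the first-turn flag are assumptions about how your chat backend is structured, and whether a first-message notice is sufficient in your interface is a legal question the code does not answer.

```python
AI_DISCLOSURE = "You are chatting with an AI assistant."


def with_disclosure(reply: str, is_first_turn: bool) -> str:
    """Prepend the AI notice to the first reply of a conversation."""
    if is_first_turn:
        return f"{AI_DISCLOSURE}\n\n{reply}"
    return reply


print(with_disclosure("Your order ships tomorrow.", is_first_turn=True))
```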

The local AI advantage

When AI runs on your local hardware:

- Easier classification: you control exactly what the model does

- Simpler documentation: no provider dependency

- Direct oversight: full access to model behavior

- Less regulatory risk: no third-party data transfers


VORLUX AI | vorluxai.com | AI that complies with the EU AI Act by design.

Sources:

- Regulation (EU) 2024/1689, Articles 5-6

- EU AI Act Official Portal

- High-Risk AI Deadline: What August 2026 Means

- EU AI Act Compliance Guide — GDPR Register
